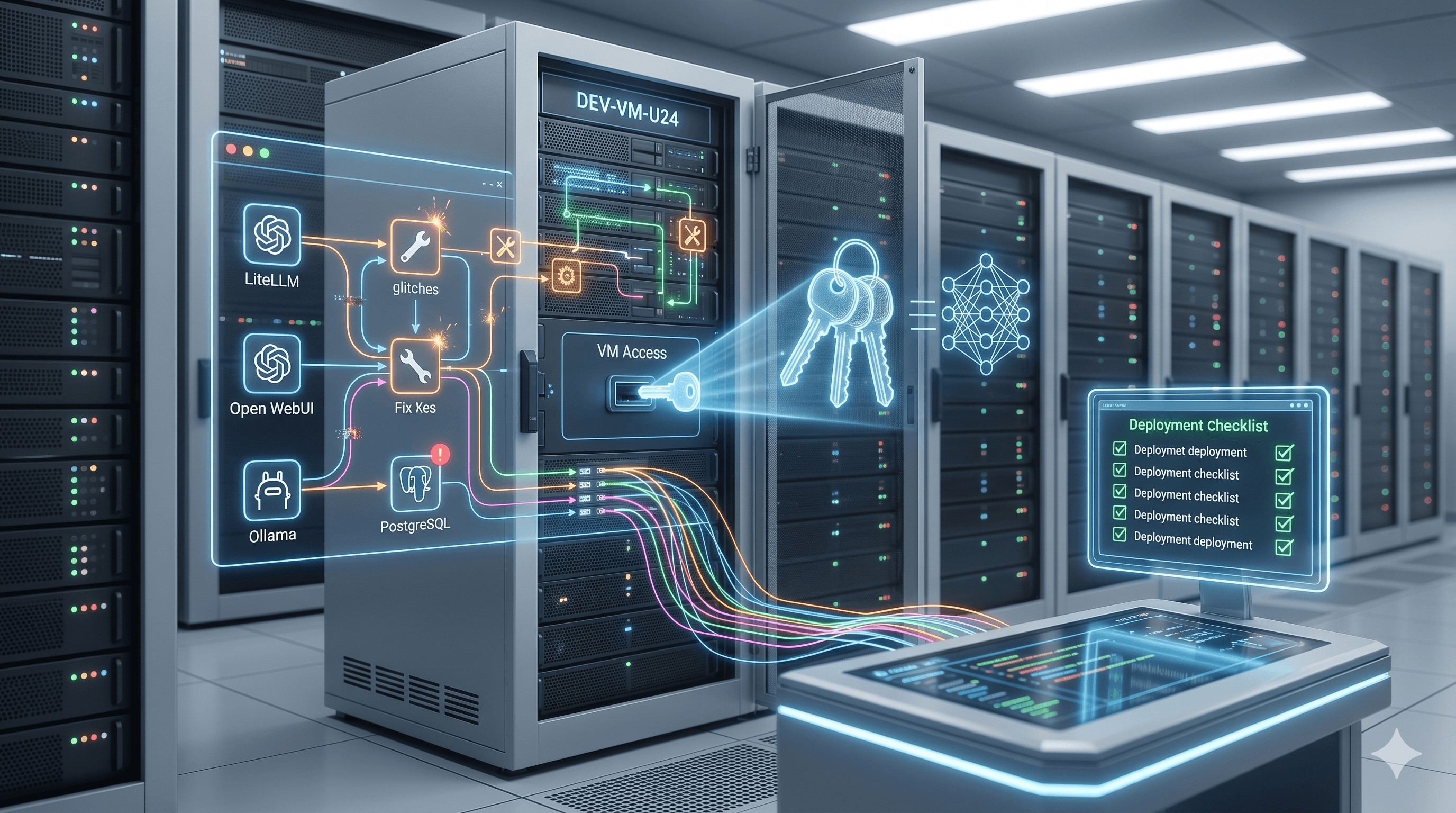

I Handed Claude Code the Keys to a Fresh VM and Walked Away. Here's What Broke.

Lessons learned from letting an AI agent build a self-hosted inference stack and the five configuration traps it missed.

Not "here's what failed catastrophically." The stack actually came up. But there were five things that needed fixing before it was really usable, and at least two of them are traps I'd probably have fallen into manually anyway.

This is the full breakdown: what I was building, how I prompted it, what came out, and where I had to go back in with a screwdriver.

What I Was Actually Trying to Build

A self-hosted AI inference stack on an Ubuntu Server 24.04 VM running inside VMware ESXi 8. CPU-only for now (this is a dev environment, not the homelab box with the RTX 3060). The goal was something I could use to test local models, play with an LLM gateway, and have a real web interface to interact with everything.

Four services, one Docker bridge network:

| Service | Role |
|---|---|
| Ollama | Local LLM runtime |
| LiteLLM | OpenAI-compatible proxy/gateway |
| Open WebUI | Web chat frontend |
| PostgreSQL 16 | Backend database for LiteLLM |

VM specs were intentionally modest:

| Component | Spec |
|---|---|
| OS | Ubuntu Server 24.04 |
| RAM | 32 GB |
| vCPUs | 8 |
| Disk | 150 GB |
| GPU | None (CPU-only) |
| Platform | VMware ESXi 8 |

The architecture I wanted: Open WebUI talks to LiteLLM as its OpenAI-compatible backend. LiteLLM routes to local Ollama models or Ollama Cloud depending on what's selected. Ollama itself has no host port binding — completely internal to the Docker bridge network. Only ports 3000 and 4000 exposed to the outside, UFW locking everything else.

The Prompt Was the Whole Game

Before running anything, I spent real time writing the Claude Code prompt. This ended up being the part that mattered most.

I covered directory structure, secret generation strategy, the full Docker Compose configuration, healthcheck logic, UFW rules, and a required credential summary at the end. A few things I was careful about:

Secrets handling. All passwords and API keys generated with openssl rand, stored in a .env file immediately chmod 600'd, never embedded in any config file. LiteLLM's config.yaml uses os.environ/ references throughout. The .env gets passed to containers via Docker's env_file: directive, which injects values as environment variables rather than mounting the file anywhere web-accessible.
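A minimal sketch of that secrets step, assuming a hex-encoded openssl rand and two illustrative variables. POSTGRES_PASSWORD appears later in this post; LITELLM_MASTER_KEY and the sk- prefix are assumptions about how the key was named, not something the run's output confirms.

```shell
# Write a fresh .env with random secrets. Refuses to overwrite an existing file.
gen_env_file() {
  env_path=$1
  if [ -e "$env_path" ]; then
    echo "refusing to overwrite $env_path" >&2
    return 1
  fi
  # Create the file owner-only (umask 077 gives mode 600) before any secret lands in it.
  ( umask 077 && : > "$env_path" )
  {
    printf 'POSTGRES_PASSWORD=%s\n' "$(openssl rand -hex 24)"
    # Assumed variable name and prefix, for illustration only.
    printf 'LITELLM_MASTER_KEY=sk-%s\n' "$(openssl rand -hex 24)"
  } >> "$env_path"
  # Explicit chmod as well, in case the file system ignored the umask.
  chmod 600 "$env_path"
}
```

Calling gen_env_file /opt/ai-stack/.env would write the file once and fail on any later call, so a rerun can't silently rotate secrets out from under a running stack.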

Non-interactive execution. I added an explicit execution mode block:

You have full sudo access. Execute every step autonomously without pausing to ask for confirmation, approval, or clarification. Do not prompt the user at any point during the run. Treat every step as pre-approved.

Without this, Claude Code gates on nearly every tool use. File writes, sudo commands, service restarts — all of it. That one paragraph is the difference between a fully autonomous run and sitting there clicking "approve" for twenty minutes.

Network isolation. All four containers on a single internal Docker bridge network called ai-net. They talk to each other by service name. Ollama intentionally has no host port binding. The only way to reach it from outside the Docker network is through LiteLLM.
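One way to check the isolation claim after the fact is to ask Docker which containers publish ports to the host. This is a rough sketch: the container names passed in are assumptions (container names depend on how the compose file names them), and a container that doesn't exist will also print nothing, so it would be misreported as internal only.

```shell
# Prints the host port mappings Docker has published for a container.
# Prints nothing when the container has no published ports.
published_ports() {
  docker port "$1"
}

# Succeeds (exit 0) only if the container publishes no ports to the host.
is_internal_only() {
  [ -z "$(published_ports "$1" 2>/dev/null)" ]
}

# Report isolation status for each container name given as an argument.
report_isolation() {
  for name in "$@"; do
    if is_internal_only "$name"; then
      echo "$name: internal only"
    else
      echo "$name: published to host"
    fi
  done
}
```

Running report_isolation ollama postgres litellm open-webui on the host should, if the design held, list the first two as internal only and the last two as published.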

What the Autonomous Run Actually Did

I ran the prompt and left it alone. The sequence, without any input from me:

1. Installed Docker Engine and the Compose plugin from the official Docker apt repo
2. Created /opt/ai-stack/ with the full directory structure
3. Generated all secrets with openssl rand
4. Wrote .env, immediately chmod 600'd it, added it to .gitignore
5. Wrote litellm/config.yaml using os.environ/ references (no hardcoded secrets)
6. Created prometheus/prometheus.yml as a required placeholder file (if this doesn't exist as a file, Docker creates it as a directory and the compose fails)
7. Wrote docker-compose.yml with all four services, healthchecks, and dependency ordering
8. Configured UFW: SSH rule first, then enabled with --force
9. Ran docker compose pull then docker compose up -d
10. Verified each service
11. Printed a full credential summary

One unattended session. No prompts, no approvals, no intervention.
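The UFW ordering in that sequence matters, so here is a sketch of what the firewall step might look like. The SSH-first ordering, the --force flag, and the three open ports come from this post; the explicit default policies and the dry-run wrapper are my assumptions, added so the commands can be previewed before anything touches the firewall.

```shell
# run: print the command in dry-run mode (the default here), otherwise execute it with sudo.
run() {
  if [ "${DRY_RUN:-1}" = "1" ]; then
    echo "$*"
  else
    sudo "$@"
  fi
}

configure_firewall() {
  run ufw default deny incoming   # assumed default policy
  run ufw default allow outgoing  # assumed default policy
  run ufw allow 22/tcp            # SSH first, so enabling UFW cannot lock out the session
  run ufw allow 3000/tcp          # Open WebUI
  run ufw allow 4000/tcp          # LiteLLM
  run ufw --force enable          # --force skips the interactive confirmation
}
```

Calling configure_firewall as-is only prints the commands. Setting DRY_RUN=0 first would actually apply them.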

The Architecture That Came Out

    Internet
       │
       ├── :3000 ──► Open WebUI
       └── :4000 ──► LiteLLM Proxy
                           │
                   ┌───────┴────────┐
                   │                │
                Ollama         PostgreSQL
            (internal only)  (internal only)

    All containers on Docker bridge network: ai-net

In the design as prompted, Open WebUI doesn't talk to Ollama directly. It connects to LiteLLM as its OpenAI-compatible backend, and LiteLLM routes to local Ollama models or Ollama Cloud depending on which model is selected. Everything goes through the gateway, so you get key management and spend tracking even on local model requests. (Fix 2 below adds a direct Open WebUI-to-Ollama path for local models.)

Where It Landed: Mostly Solid, Five Fixes Required

The core infrastructure was genuinely solid. Containers came up in the correct order, health checks resolved, the dependency chain worked as intended (Open WebUI waits for LiteLLM healthy, LiteLLM waits for Postgres healthy), UFW was configured correctly, and the credential summary printed cleanly at the end.

Verified externally: API keys and passwords not exposed, .env not web-accessible, secrets injected as environment variables rather than mounted files, nothing sensitive showing up in HTTP responses from either service. That part worked exactly as designed.

The five issues that needed fixing weren't fundamental architecture problems. They were configuration details, three of which are actually useful things to know regardless of how you built the stack.

Fix 1: LiteLLM Healthcheck Endpoint and Tooling

The original compose file used /health with curl for the LiteLLM healthcheck. Two problems with that:

- /health on LiteLLM requires an API key. Without one, it returns 401 Unauthorized, which Docker interprets as a failed healthcheck.
- curl isn't installed in the LiteLLM Docker image.

The fix: switch the endpoint to /health/liveliness (no auth required) and replace the curl command with a Python one-liner using urllib:

    healthcheck:
      test: ["CMD-SHELL", "python3 -c \"import urllib.request; urllib.request.urlopen('http://localhost:4000/health/liveliness')\""]
      interval: 15s
      timeout: 10s
      retries: 5
      start_period: 30s

This one had the biggest impact. Open WebUI's depends_on condition is service_healthy for LiteLLM. Broken healthcheck means Open WebUI never starts. Fix the healthcheck and everything downstream resolves.
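To see whether a healthcheck is what's holding up the dependency chain, you can poll the status Docker records for the container. A small sketch, assuming the container is named litellm (the compose service name; the actual container name may differ):

```shell
# Prints the Docker health status of a container: starting, healthy, or unhealthy.
health_status() {
  docker inspect --format '{{.State.Health.Status}}' "$1"
}

# Poll until the container reports healthy.
# Usage: wait_healthy NAME [TRIES] [DELAY_SECONDS]
wait_healthy() {
  name=$1
  tries=${2:-20}
  delay=${3:-3}
  state=unknown
  i=0
  while [ "$i" -lt "$tries" ]; do
    state=$(health_status "$name" 2>/dev/null)
    if [ "$state" = "healthy" ]; then
      return 0
    fi
    i=$((i + 1))
    sleep "$delay"
  done
  echo "$name not healthy after $tries checks (last status: ${state:-unknown})" >&2
  return 1
}
```

Something like wait_healthy litellm 20 3 would give up after about a minute, which is usually enough to tell a slow start from a healthcheck that can never pass.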

Fix 2: ENABLE_OLLAMA_API Was Set to False

The initial config had ENABLE_OLLAMA_API: "false" on the Open WebUI service. Local Ollama models pulled into the container weren't showing up in the model selector at all, only LiteLLM-proxied models.

Setting it to "true" gives Open WebUI a direct connection to Ollama for listing and running local models, while still using LiteLLM as the gateway for API-keyed requests. Both paths active simultaneously.

Fix 3: DATABASE_URL Leaking Into Open WebUI

This was the trickiest one, and honestly the most useful thing in this whole post.

Using env_file: .env on a service in Docker Compose passes all variables in that file to the container. Every one. If multiple services share the same .env, anything in there goes to all of them.

DATABASE_URL (LiteLLM's PostgreSQL connection string) was in the .env file. Open WebUI picked it up, tried to connect to LiteLLM's Postgres instance, and crashed immediately on missing tables — it was looking for calendar_event and others that exist in Open WebUI's own schema, not LiteLLM's. The web UI stopped loading entirely.

The fix: remove DATABASE_URL from .env entirely. Set it inline on the LiteLLM service only:

    litellm:
      environment:
        DATABASE_URL: postgresql://litellm:${POSTGRES_PASSWORD}@postgres:5432/litellm

Open WebUI then correctly fell back to its default SQLite database. Any admin account created during the misconfiguration was in the wrong database and was gone after the fix, so a fresh account had to be created. Minor, but worth knowing ahead of time.

This is actually a Docker pattern worth keeping in mind well outside of this specific stack. Shared .env files and service-specific secrets don't mix cleanly. Anything that's genuinely owned by one service should be set inline on that service, not in the shared file.
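A quick way to catch this kind of leak is to list which running containers actually see a given variable. This is a sketch under the assumption that the containers are named after their services (litellm, open-webui, postgres); adjust to whatever docker ps shows.

```shell
# Prints the environment of a running container, one VAR=value per line.
container_env() {
  docker exec "$1" env
}

# Prints the name of every listed container whose environment defines VAR.
# Usage: services_with_var VAR CONTAINER...
services_with_var() {
  var=$1
  shift
  for svc in "$@"; do
    if container_env "$svc" 2>/dev/null | grep -q "^${var}="; then
      echo "$svc"
    fi
  done
}
```

With the fix in place, services_with_var DATABASE_URL litellm open-webui postgres should print only litellm. Any other name in the output means the variable is still coming in through a shared file.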

Fix 4: Ollama Cloud api_base Wrong

When I added Ollama Cloud models to LiteLLM after the initial setup, the api_base I used was wrong. LiteLLM's OpenAI provider appends /chat/completions to whatever api_base you give it. The path needs to already include /v1:

| What I used | What LiteLLM constructed | Result |
|---|---|---|
| https://ollama.com | https://ollama.com/chat/completions | 404 |
| https://ollama.com/api | https://ollama.com/api/chat/completions | 404 |
| https://ollama.com/v1 | https://ollama.com/v1/chat/completions | 200 |

The correct config.yaml entry:

    - model_name: my-cloud-model
      litellm_params:
        model: openai/gpt-oss-120b
        api_base: https://ollama.com/v1
        api_key: os.environ/OLLAMA_API_KEY
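The append rule is easy to model before touching the config. The function below is only an illustration of the behavior described in this section, not LiteLLM's actual code, and trimming a trailing slash is my own choice rather than confirmed LiteLLM behavior.

```shell
# Model of the rule described above: /chat/completions is appended to api_base.
# Trimming one trailing slash is this sketch's own choice, not confirmed LiteLLM behavior.
chat_completions_url() {
  printf '%s/chat/completions\n' "${1%/}"
}

# Show what each candidate api_base would turn into.
for base in https://ollama.com https://ollama.com/v1; do
  echo "$base -> $(chat_completions_url "$base")"
done
```

Only the second candidate lands on a /v1/chat/completions path, which is the one that returned 200.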

Fix 5: Ollama Is in Docker — Its CLI Is Too

This one's on me. After setup I ran ollama pull llama3.2 on the host and got a command not found error. The Ollama binary isn't on the host because Ollama is running inside a container.

All Ollama operations in a Dockerized install go through docker exec:

    # Pull a model
    docker exec -it ollama ollama pull llama3.2

    # List models
    docker exec ollama ollama list

No functional impact. Just operator awareness, and the kind of thing you forget in the moment.
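If the muscle memory is hard to break, a small shell function on the host can forward the ollama command into the container. This is a convenience sketch, assuming the container is named ollama as in the commands above; it is not part of what the run produced.

```shell
# Forward host-side "ollama ..." calls to the Ollama CLI inside the container.
# A TTY is requested only when both stdin and stdout are terminals, so the
# wrapper also works in pipes and scripts.
ollama() {
  if [ -t 0 ] && [ -t 1 ]; then
    docker exec -it ollama ollama "$@"
  else
    docker exec ollama ollama "$@"
  fi
}
```

Dropped into ~/.bashrc, this makes ollama pull llama3.2 and ollama list behave the way they would on a native install.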

Adding Models to the Stack

Once you've got a model pulled, getting it into LiteLLM and visible in Open WebUI is three steps:

Step 1 — Pull the model into the Ollama container:

sudo docker exec -it ollama ollama pull llama3.2

Step 2 — Add it to /opt/ai-stack/litellm/config.yaml:

    model_list:
      - model_name: llama3.2
        litellm_params:
          model: ollama/llama3.2
          api_base: http://ollama:11434

Step 3 — Restart LiteLLM:

sudo docker compose -f /opt/ai-stack/docker-compose.yml restart litellm

It'll appear in Open WebUI's model selector. Local Ollama models also show up directly in Open WebUI via the Ollama connection (bypassing LiteLLM) because ENABLE_OLLAMA_API is enabled.
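To confirm the gateway picked up the new entry without opening the web UI, you can ask LiteLLM's OpenAI-compatible model listing. A sketch with some assumptions: LITELLM_URL and LITELLM_MASTER_KEY are illustrative variable names for the gateway address and an API key, and the JSON is parsed with grep and sed so the host doesn't need jq.

```shell
# Fetch the model list from the gateway (variable names are assumptions).
list_models() {
  curl -fsS -H "Authorization: Bearer ${LITELLM_MASTER_KEY}" \
    "${LITELLM_URL:-http://localhost:4000}/v1/models"
}

# Extract every "id" value from JSON on stdin, one per line.
model_ids() {
  grep -o '"id" *: *"[^"]*"' | sed 's/.*"\([^"]*\)"$/\1/'
}

# Succeeds if the exact model name appears in the JSON on stdin.
has_model() {
  model_ids | grep -qxF -- "$1"
}
```

For example, list_models | has_model llama3.2 && echo "registered" would print registered once the restart in Step 3 has finished.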

What I Actually Took Away From This

Prompt quality determines output quality, full stop. The reason this went as smoothly as it did is that the prompt was thorough. Directory structure, secret generation, network topology, healthcheck logic, UFW ordering — all of it specified explicitly. Gaps in the prompt become gaps in the output. That's not a Claude Code problem, that's just how agentic automation works.

The non-interactive directive is not optional. Without it you're not running autonomous infrastructure work, you're running a slightly faster manual process.

The env_file scope issue is the most interesting one to me, because it's not obvious until it bites you and it has nothing to do with AI-generated configs specifically. It's a Docker behavior. Worth knowing.

90% correct on first run for a full four-service stack with network isolation, secrets management, and firewall configuration is genuinely good. The five issues were all configuration details. Nothing had to be rebuilt. The services came up, the network worked, the secrets were handled correctly, and the firewall was sane.

For a CPU-only dev environment on a clean VM with no prior configuration, I'll take that result.

The Final Stack

| Service | Access | Purpose |
|---|---|---|
| Open WebUI | :3000 | Chat interface |
| LiteLLM Admin UI | :4000/ui | Key management, spend tracking |
| LiteLLM API | :4000 | OpenAI-compatible gateway |
| Ollama | Internal only | Local model runtime |
| PostgreSQL | Internal only | LiteLLM database |

Firewall: ports 22, 3000, and 4000 open. Everything else blocked inbound.

Secrets: generated with openssl rand, stored in chmod 600 .env, injected as environment variables. Not web-accessible.

Stack: Ollama + LiteLLM + Open WebUI + PostgreSQL | Docker Compose | Ubuntu Server 24.04 | VMware ESXi 8 | CPU-only