A Roblox Cheat Script Took Down Vercel. Here's the Full Kill Chain.

How a game cheat script, an over-permissioned OAuth grant, and unencrypted environment variables turned one developer's bad download into an enterprise-wide breach -- and what your security team needs to do before the same chain reaches you.

Someone at a small AI company wanted to automate their Roblox farming. They downloaded an executor script from some sketchy corner of the internet, the way developers do when they think their personal machine is their own business. Lumma Stealer was bundled in. Browser credentials got vacuumed up. OAuth tokens walked out the door.

Two months later, Vercel -- the platform that hosts and deploys web applications for thousands of enterprise teams, and the company that built Next.js -- was confirming a breach. Google Mandiant was engaged. Law enforcement was notified. Stolen data went up on BreachForums with a $2 million asking price.

That's the chain. One game cheat script to a major cloud platform compromise. No zero-days. No sophisticated phishing campaign targeting a privileged admin. Just a bad download, a poorly scoped OAuth grant, and a misconfigured Google Workspace environment that let one employee's personal decision become an enterprise-wide exposure.

I want to walk through this one carefully because every link in this chain is sitting in your environment right now, probably without anyone having audited it.

The Timeline

The public disclosure happened April 19, 2026. But the initial access was February.

February 2026: A Context.ai developer downloads what looks like a Roblox auto-farm executor. Lumma Stealer deploys. The infected machine leaks Google Workspace credentials, session cookies, OAuth tokens, and keys for Supabase, Datadog, and Authkit. The support@context.ai account is in the harvest. Hudson Rock had this data sitting in their cybercrime intelligence database a month before any of this became public.

March 2026: Attacker uses the stolen credentials to breach Context.ai's AWS environment. Context.ai detects it, brings in CrowdStrike, shuts down the compromised infrastructure. Incident gets logged. Investigation gets closed. What nobody caught: the attacker had also pulled OAuth tokens from Context.ai's consumer users during the same access window.

April 17-19, 2026: The compromised OAuth token gets used to access a Vercel employee's Google Workspace account. From there, the attacker moves laterally into Vercel's internal environments, enumerates environment variables not marked as sensitive, and grabs what they came for.

April 19, 2026, 02:02 ET: BreachForums post goes up under the ShinyHunters name. Internal DB, employee accounts, GitHub tokens, npm tokens, source code fragments, activity timestamps. $2M. Separately, a $2M ransom demand lands via Telegram. (It's worth noting that actual ShinyHunters members denied involvement to BleepingComputer -- this may be a copycat. Doesn't change the confirmed scope of the breach.)

April 19-20, 2026: Vercel publishes their security bulletin. CEO Guillermo Rauch posts the attack chain publicly. Mandiant is named as the IR partner. The IOC gets published: OAuth App Client ID 110671459871-30f1spbu0hptbs60cb4vsmv79i7bbvqj.apps.googleusercontent.com. Vercel's services stay operational. Next.js and Turbopack supply chain is analyzed and believed to be clean.

The Actual Failure Points

There are three places this could have been stopped. None of them required exotic tooling.

Failure 1: Shadow AI via OAuth

Context.ai builds enterprise AI agents that plug into your company's institutional knowledge, workflows, and communication tools. To do that, it needs OAuth access to Google Workspace. Makes sense for a sanctioned enterprise deployment.

But the Vercel employee who triggered this wasn't using a company-provisioned Context.ai account. They signed up for the AI Office Suite consumer product using their Vercel enterprise email. Then they clicked "Allow All" on the OAuth permissions prompt, because that's what the UI asked them to do and they wanted the product to work.

Vercel's internal Google Workspace configuration allowed that action to propagate broad permissions at the enterprise level, not just the individual account. One employee's personal tool adoption became an enterprise OAuth grant.

This is the AI shadow IT problem. It's not a developer downloading unapproved software onto their endpoint anymore. It's a developer authenticating a third-party AI agent against your identity provider using their work credentials, with zero visibility from your security team, and it's happening constantly. Every AI productivity tool with an "Add to Google Workspace" button is a potential entry point.

Failure 2: OAuth Scope Was Never Governed

Google Workspace has controls for this. Admins can restrict which third-party apps can request which scopes. They can require admin approval before any new OAuth app is authorized. They can block broad grants entirely and require explicit justification for anything beyond read-only calendar access.

Those controls weren't in place in a way that caught this. A consumer-tier AI product was able to request and receive deployment-level Google Workspace permissions against an enterprise tenant.

The Vercel IOC gives you something concrete to hunt for right now:

Admin Console → Security → API Controls → App Access Control

Search for OAuth Client ID: 110671459871-30f1spbu0hptbs60cb4vsmv79i7bbvqj.apps.googleusercontent.com

If that app shows up in your tenant, revoke it and start your incident response. If it doesn't, you still have work to do -- because there are other apps in that console that you've never reviewed.

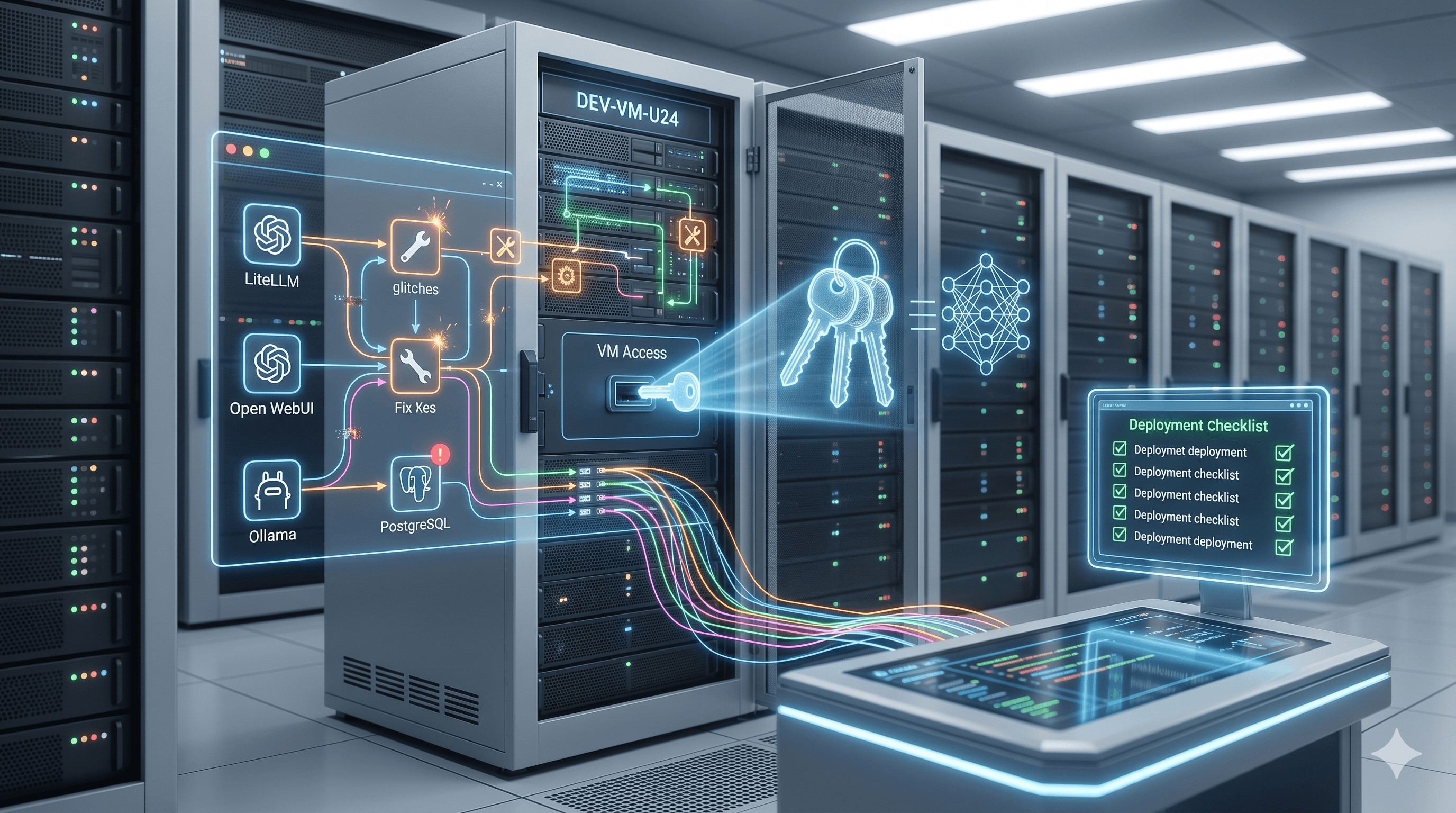
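If you'd rather check an export than click through the console, the hunt can be sketched in a few lines. This is a minimal sketch, assuming you've already dumped per-user OAuth grants into a list of dicts with `clientId` and `userKey` fields. Those field names are modeled on the token resource in Google's Admin SDK Directory API, so verify them against your own export. The sample records and the function name `find_ioc_grants` are hypothetical.

```python
# IOC client ID as published in Vercel's bulletin (quoted above).
IOC_CLIENT_ID = "110671459871-30f1spbu0hptbs60cb4vsmv79i7bbvqj.apps.googleusercontent.com"


def find_ioc_grants(tokens, ioc=IOC_CLIENT_ID):
    """Return every grant record whose clientId matches the IOC."""
    hits = []
    for token in tokens:
        # Normalize whitespace and case so a sloppy export still matches.
        client_id = str(token.get("clientId", "")).strip().lower()
        if client_id == ioc.lower():
            hits.append(token)
    return hits


# Hypothetical export: two users, one of whom authorized the flagged app.
sample_tokens = [
    {"userKey": "alice@example.com", "clientId": IOC_CLIENT_ID,
     "scopes": ["https://www.googleapis.com/auth/drive"]},
    {"userKey": "bob@example.com",
     "clientId": "000000000000-example.apps.googleusercontent.com",
     "scopes": ["https://www.googleapis.com/auth/calendar.readonly"]},
]

for hit in find_ioc_grants(sample_tokens):
    print(f"REVOKE: {hit['userKey']} authorized {hit['clientId']}")
```

A hit here means the same thing as a hit in the console: revoke the grant and treat the account as compromised until proven otherwise.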

Failure 3: Environment Variables Aren't Secrets Management

Vercel distinguishes between "sensitive" and "non-sensitive" environment variables. Sensitive variables get encrypted at rest in a way that prevents them from being read back. Non-sensitive variables don't.

The attacker accessed the non-sensitive ones. And here's the problem with that designation: developers store API keys, database connection strings, deployment tokens, and auth secrets in environment variables as a matter of routine. Whether something gets flagged as sensitive depends on whoever created the variable, following whatever internal convention the team agreed on, consistently, across every project.

That's not a security control. That's an honor system with production credentials.

The variables Vercel described as non-sensitive almost certainly contained credentials with lateral pivot potential into downstream services. That's exactly how these attacks compound -- you pull an unencrypted environment variable, it contains an AWS access key or a database URL, and now you're somewhere else entirely.
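To make the honor-system problem concrete, here is a rough sketch of an audit that flags variables that look like credentials but aren't marked sensitive. The input shape (`name`, `value`, `sensitive`) is a hypothetical export format, not Vercel's API, and the three patterns are illustrative only. A real secret scanner carries far more rules.

```python
import re

# Illustrative patterns only; real scanners use much larger rule sets.
SECRET_PATTERNS = {
    "aws_access_key_id": re.compile(r"\b(?:AKIA|ASIA)[0-9A-Z]{16}\b"),
    "url_with_password": re.compile(r"\b[a-z][a-z0-9+.-]*://[^/\s:@]+:[^/\s@]+@"),
    "private_key_block": re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
}
SUSPICIOUS_NAME = re.compile(r"(SECRET|TOKEN|PASSWORD|PASSWD|API_?KEY|PRIVATE)", re.IGNORECASE)


def audit_env_vars(variables):
    """Return (name, reasons) for secret-looking variables not flagged sensitive."""
    findings = []
    for var in variables:
        if var.get("sensitive"):
            continue  # already stored in the protected tier
        name, value = var["name"], var.get("value", "")
        reasons = [label for label, pat in SECRET_PATTERNS.items() if pat.search(value)]
        if SUSPICIOUS_NAME.search(name):
            reasons.append("suspicious_name")
        if reasons:
            findings.append((name, reasons))
    return findings


# Hypothetical project export; the AWS key is the placeholder from AWS's own docs.
sample_vars = [
    {"name": "NEXT_PUBLIC_SITE_URL", "value": "https://example.com", "sensitive": False},
    {"name": "DATABASE_URL", "value": "postgres://app:hunter2@db.example.com:5432/prod", "sensitive": False},
    {"name": "AWS_ACCESS_KEY_ID", "value": "AKIAIOSFODNN7EXAMPLE", "sensitive": False},
    {"name": "STRIPE_SECRET_KEY", "value": "placeholder", "sensitive": True},
]

for name, reasons in audit_env_vars(sample_vars):
    print(f"{name}: {', '.join(reasons)}")
```

Anything this flags is a candidate for rotation first and re-classification second.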

What the AI Supply Chain Actually Means

Traditional supply chain security is about code. Malicious npm packages. Compromised build pipelines. Backdoored open source dependencies. You can scan for those. You can pin to known-good hashes. You can run SAST against them.

The AI supply chain doesn't work that way. It operates through identity and authorization. The attack surface is every OAuth grant your employees have made to every AI tool they've connected to their work accounts. You can't scan it. You can't hash it. You can't catch it at the perimeter.

What you can do is inventory it.

Pull every third-party OAuth application that's been authorized against your Google Workspace or Microsoft 365 tenant. You will find things you didn't know existed. AI writing tools, AI meeting summarizers, AI document editors, AI scheduling assistants -- all of them connected to your identity provider, many of them granted broader scopes than they needed, most of them authorized by individual employees without security review, all of them backed by companies with security postures you've never assessed.
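Once you have the raw grants, the inventory itself is a grouping problem. The sketch below assumes the same hypothetical export shape as a per-user grant list (`clientId`, `userKey`, `scopes`). The set of scopes treated as "broad" is my own illustrative choice, not an official classification, so swap in your own policy.

```python
from collections import defaultdict

# Scopes treated as "broad" for this sketch; adjust to your own policy.
BROAD_SCOPES = {
    "https://mail.google.com/",
    "https://www.googleapis.com/auth/drive",
    "https://www.googleapis.com/auth/admin.directory.user",
}


def inventory_oauth_apps(tokens):
    """Group per-user grants by app and list broad-scope apps first."""
    apps = defaultdict(lambda: {"users": set(), "scopes": set()})
    for token in tokens:
        app = apps[token["clientId"]]
        app["users"].add(token["userKey"])
        app["scopes"].update(token.get("scopes", []))

    report = []
    for client_id, app in apps.items():
        report.append({
            "client_id": client_id,
            "user_count": len(app["users"]),
            "broad_scopes": sorted(app["scopes"] & BROAD_SCOPES),
        })
    # Apps holding broad scopes first, then by how many users authorized them.
    report.sort(key=lambda row: (-len(row["broad_scopes"]), -row["user_count"]))
    return report


# Hypothetical grants: one app with Drive access, one calendar-only app.
DRIVE = "https://www.googleapis.com/auth/drive"
CAL_RO = "https://www.googleapis.com/auth/calendar.readonly"
sample_grants = [
    {"userKey": "a@example.com", "clientId": "meeting-notes-app.example", "scopes": [DRIVE, CAL_RO]},
    {"userKey": "b@example.com", "clientId": "meeting-notes-app.example", "scopes": [DRIVE]},
    {"userKey": "a@example.com", "clientId": "scheduler-app.example", "scopes": [CAL_RO]},
    {"userKey": "c@example.com", "clientId": "scheduler-app.example", "scopes": [CAL_RO]},
    {"userKey": "d@example.com", "clientId": "scheduler-app.example", "scopes": [CAL_RO]},
]

report = inventory_oauth_apps(sample_grants)
for row in report:
    print(row)
```

Sorting by scope breadth before user count is deliberate: an app with full Drive access and two users deserves review before a read-only calendar tool with three hundred.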

When I ran this audit for the first time in our environment, the list was longer and weirder than I expected. Tools I'd never heard of connected to accounts that had no business connecting to them.

The Response Playbook

The community IR playbook for this incident is solid. Here's the condensed version.

Immediate (do this today)

Rotate Vercel secrets. If your org uses Vercel, rotate everything -- not just what you think might have been exposed. Conservative exposure window is April 1 through April 19 at minimum. Pay particular attention to:

GitHub integration tokens

Linear integration tokens

Deployment protection tokens

Any environment variable that touches a payment processor, database, auth provider, or cloud IAM

Check your Google Workspace for the IOC. Instructions above. If it's there, you're in incident response mode. If it's not, keep going with the audit anyway.

Pull your CloudTrail and auth provider logs for the exposure window. Look for unusual API calls, GetObject bursts against S3, CreateUser or AttachUserPolicy events, console logins from new ASNs or geographies. Same thing in your database audit logs -- unexpected SELECT *, large exports, connections from unknown IPs.
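For the CloudTrail part of that hunt, a first pass can look something like the sketch below. It assumes you've already loaded CloudTrail records as dicts with the standard `eventName`, `eventTime`, and `sourceIPAddress` fields. The known-IP allowlist is a simplified stand-in for real ASN or geography checks, and the burst threshold is an arbitrary placeholder. Note that `GetObject` only appears if S3 data events are enabled on the trail.

```python
from collections import Counter

# IAM changes called out above as worth a look during the exposure window.
IAM_EVENTS = {"CreateUser", "AttachUserPolicy"}


def hunt_cloudtrail(records, known_ips, burst_threshold=100):
    """Flag IAM changes, console logins from unknown IPs, and GetObject bursts."""
    findings = []
    get_object_counts = Counter()
    for rec in records:
        name = rec.get("eventName")
        ip = rec.get("sourceIPAddress")
        if name in IAM_EVENTS:
            findings.append(("iam_change", name, ip))
        elif name == "ConsoleLogin" and ip not in known_ips:
            findings.append(("login_from_new_ip", name, ip))
        elif name == "GetObject":
            # Bucket by (source IP, hour); eventTime looks like 2026-04-18T03:12:45Z.
            hour = rec["eventTime"][:13]
            get_object_counts[(ip, hour)] += 1
    for (ip, hour), count in get_object_counts.items():
        if count >= burst_threshold:
            findings.append(("getobject_burst", f"{count} calls in hour {hour}", ip))
    return findings


# Hypothetical records using documentation-reserved IP ranges.
sample_records = [
    {"eventName": "CreateUser", "eventTime": "2026-04-18T03:01:10Z", "sourceIPAddress": "203.0.113.7"},
    {"eventName": "ConsoleLogin", "eventTime": "2026-04-18T03:05:00Z", "sourceIPAddress": "203.0.113.7"},
    {"eventName": "ConsoleLogin", "eventTime": "2026-04-18T09:00:00Z", "sourceIPAddress": "198.51.100.10"},
] + [
    {"eventName": "GetObject", "eventTime": f"2026-04-18T03:1{i}:00Z", "sourceIPAddress": "203.0.113.7"}
    for i in range(5)
]

findings = hunt_cloudtrail(sample_records, known_ips={"198.51.100.10"}, burst_threshold=5)
for finding in findings:
    print(finding)
```

This is triage, not detection engineering. It tells you where to look first, and every finding still needs a human to decide whether it was the attacker or a deploy script.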

Medium-Term (next 30 days)

Build an AI tool approval workflow. Any AI tool that requests OAuth access to enterprise systems needs to go through a review before it gets authorized. This applies to IT-provisioned tools and to the one your developer added last Tuesday using their work Gmail because it looked useful. Shadow AI is now a primary attack surface and needs a governance process.

Enforce least-privilege OAuth scopes at the tenant level. Work with your Google Workspace or M365 admin to restrict what scopes third-party apps can request. Calendar tools don't need Drive access. Document tools don't need admin API access. Default-deny on broad grants, explicit approval required.

Move secrets to an actual secrets manager. HashiCorp Vault, AWS Secrets Manager, Azure Key Vault. The migration is a real project. It's also significantly cheaper than the incident response retainer you'll need after the breach that happens because you didn't do it. Encryption at rest, access logging, automatic rotation, audit trails -- none of that exists in raw environment variable storage.

Run the full OAuth inventory. Not just Google Workspace. Okta, GitHub, Slack, Notion, Linear, Jira -- anywhere employees authenticate third-party tools. Every one of those integrations is a dependency on someone else's security posture.

Stand up ITDR. Identity Threat Detection and Response -- correlating sign-ins, permission changes, and audit events across your identity provider to surface account takeovers before they become lateral movement. This attack pattern is exactly what ITDR is designed to catch. If you don't have it, add it to the roadmap.

The Part That Actually Concerns Me

Vercel CEO Guillermo Rauch described the attacker as "highly sophisticated based on their operational velocity and detailed understanding of Vercel's systems" and suggested the activity may have been significantly AI-accelerated.

Think about that for a second. An attacker using AI tooling to enumerate internal systems, understand architecture, and move laterally faster than a human IR team can track. The same kind of AI-assisted workflow that's making developers more productive is apparently making threat actors more productive too, and they've had longer to figure out how to use it offensively.

The time an attacker needs to get from initial access to exfiltration is getting shorter. That means the detection and response capability on the defender's side needs to keep up. Manual processes and periodic audits aren't going to cut it when the attacker is operating at machine speed.

A Note for Public Safety and Critical Infrastructure

I work in 911 dispatch. I see computer-aided dispatch (CAD) systems and operational technology management platforms that increasingly use cloud-hosted deployment pipelines and modern developer tooling. I've also watched the AI productivity tool adoption curve hit our industry without anything resembling a corresponding security governance curve.

The downstream consequences of a compromised environment variable connected to an emergency communications platform are different in kind from a compromised DeFi frontend. The controls are identical. The urgency is higher.

If you're in critical infrastructure, utilities, public safety, or any environment where operational disruption has consequences beyond financial damage -- run the OAuth audit. Enforce scope restrictions. Treat every AI tool adoption as a third-party vendor onboarding event, because that's exactly what it is.

Written April 20, 2026. This incident is under active investigation. Vercel's bulletin is being updated as their team and Mandiant continue their analysis -- check it before you act on anything here.