The Week OAuth Became a Liability: Supply Chains, Biobank Data, and 163 Patches

When legitimate access becomes the attack surface

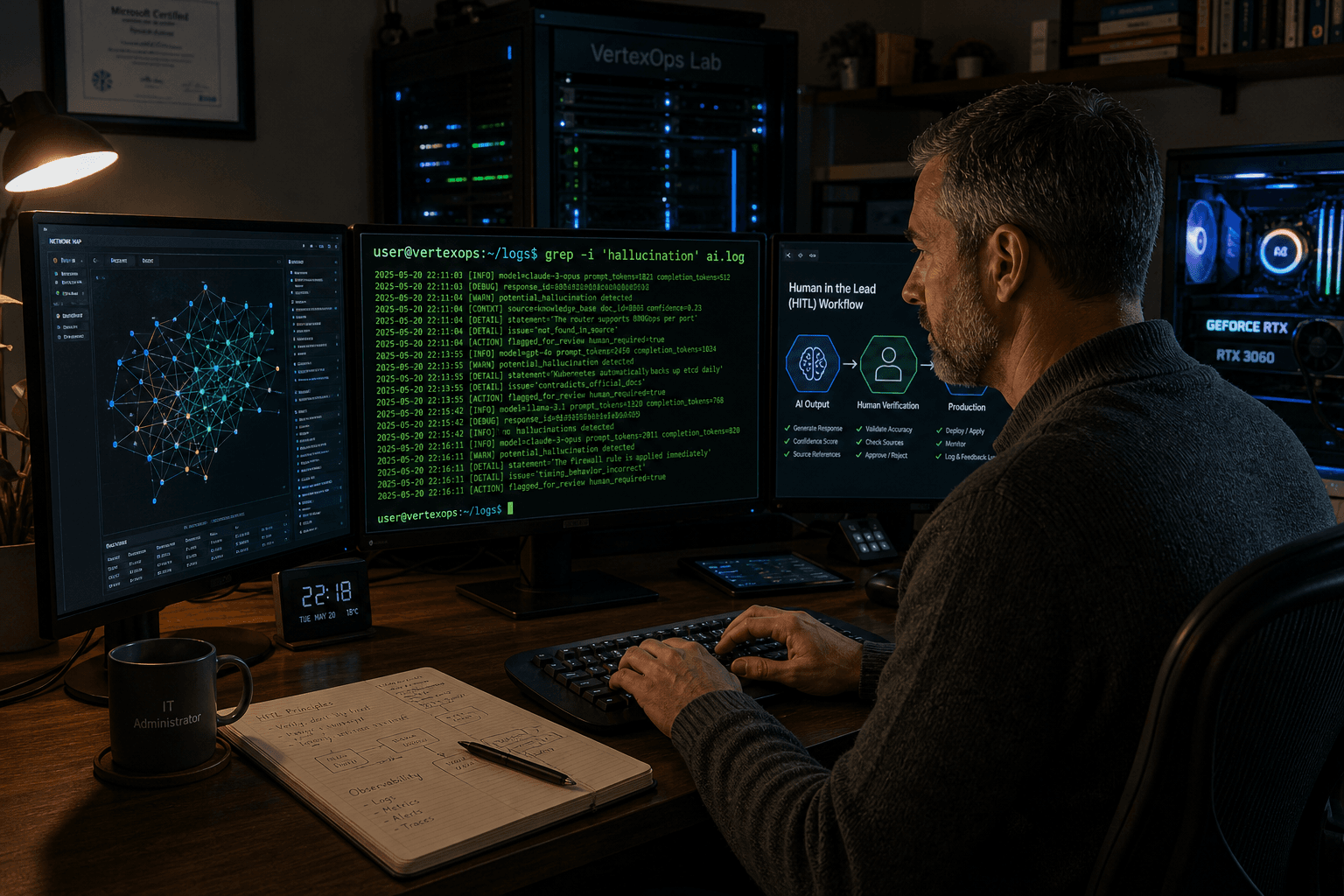

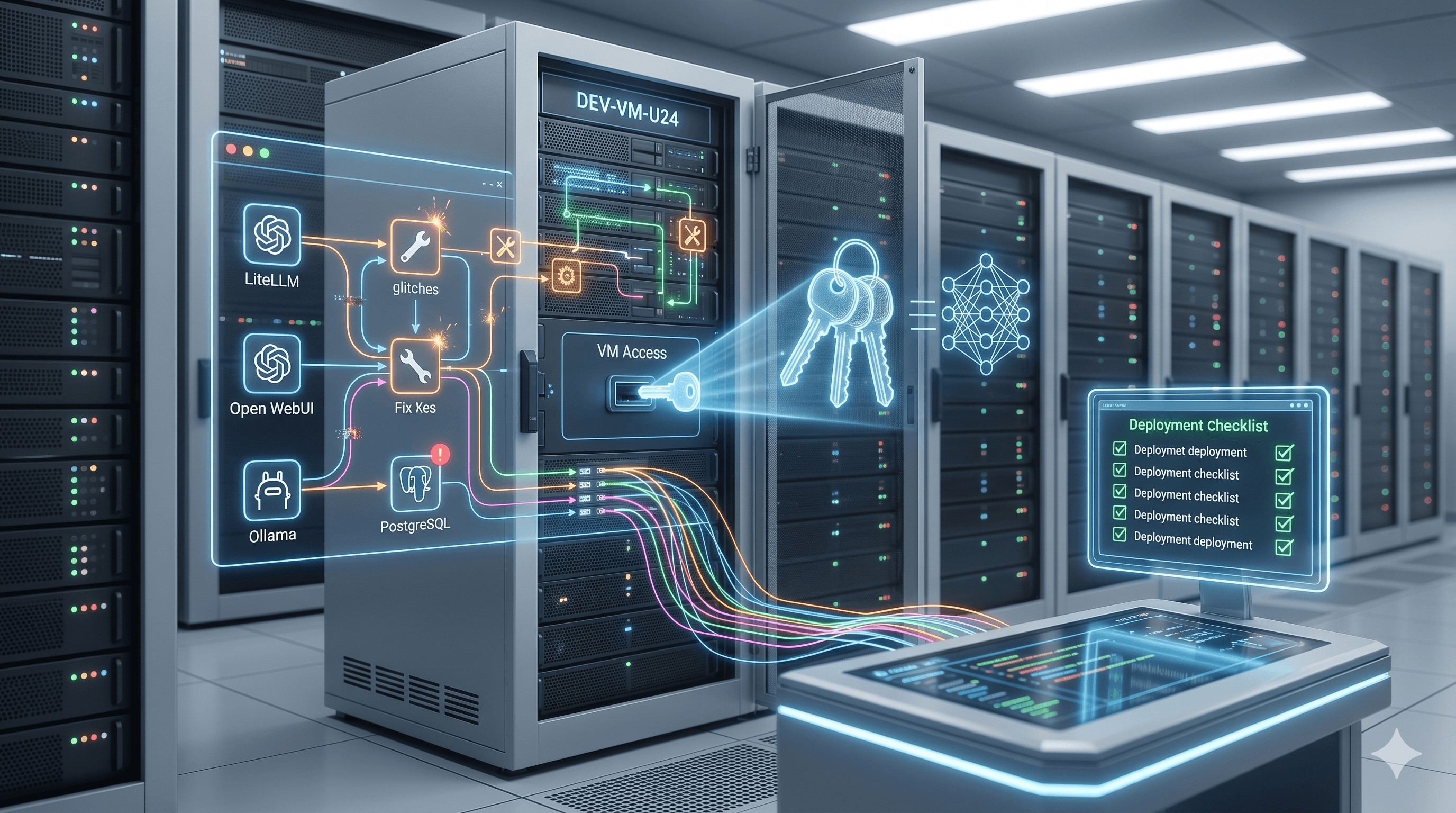

I run a homelab. Ollama, Open WebUI, LiteLLM, all proxied behind Nginx on Ubuntu Server 24.04. I have a handful of third-party integrations connected to various accounts because convenience has a way of winning the argument in the moment.

This past week I went down a rabbit hole auditing every OAuth grant I've got, and it was not a comfortable audit.

Go ahead and do yours too.

Vercel: The Breach That Started with Roblox Cheat Scripts

Somewhere around February 2026, a Context.ai employee downloaded what was probably labeled a Roblox "auto-farm" executor or some similar game cheat. Classic Lumma Stealer delivery vector. That malware quietly exfiltrated credentials, session tokens, and OAuth data off the machine, including access to the support@context.ai account and a pile of enterprise tool credentials: Google Workspace, Supabase, Datadog, Authkit.

Fast forward to April 19. Vercel publishes a security bulletin confirming their systems were breached.

Here's the actual chain: a Vercel employee had authorized Context.ai's "AI Office Suite" product against their enterprise Google Workspace account. The Context.ai Chrome extension (omddlmnhcofjbnbflmjginpjjblphbgk) had already been removed from the Chrome Web Store back in March, but the OAuth trust relationship was still live. Nobody revoked it. Attackers used stolen OAuth tokens to take over the Vercel employee's Workspace account, pivot into Vercel's internal environment, and enumerate environment variables that weren't marked sensitive and weren't encrypted at rest.

Those variables contained API keys, GitHub tokens, and NPM tokens.

Vercel CEO Guillermo Rauch publicly stated he believes the attacker was "significantly accelerated by AI," citing unusual operational velocity and unusual depth of knowledge of Vercel's API surface. Someone on BreachForums claimed to be selling the stolen data for $2 million, framing it as material for what they called "the largest supply chain attack ever." Real ShinyHunters denied involvement. The post was removed. Vercel and their partners (GitHub, Microsoft, npm, Socket) have confirmed no published npm packages were tampered with, but the downstream blast radius for anyone whose environment variables were exposed is still being worked out.

A few things stand out to me here, as someone who spends a lot of time thinking about access controls.

This didn't start with Vercel. It started with one employee at a small AI startup downloading something sketchy. That single infection became the thread the entire incident hangs from. The OAuth grant wasn't exploited in any clever way; it was doing exactly what it was configured to do. Someone with valid tokens used them. The problem was that the trust relationship between a deprecated product and a live enterprise account was never audited or revoked.

Then Vercel's own decision to leave non-sensitive environment variables unencrypted at rest meant that once someone was inside, those variables were just... there.
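One way to catch this class of problem before an attacker does is to flag variables whose names look like secrets but that aren't marked sensitive. The sketch below is illustrative only: the record shape (`name`, `sensitive`) and the name pattern are my own assumptions, not Vercel's data model or API.

```python
import re

# Assumption: names containing these words usually hold secrets.
SECRET_NAME = re.compile(r"(KEY|TOKEN|SECRET|PASSWORD|CREDENTIAL)", re.IGNORECASE)


def unprotected_secrets(env_vars):
    """Return names that look secret but are not flagged sensitive.

    env_vars is a list of dicts with a hypothetical shape:
    {"name": str, "sensitive": bool}. A missing flag counts as False.
    """
    return sorted(
        v["name"]
        for v in env_vars
        if SECRET_NAME.search(v["name"]) and not v.get("sensitive", False)
    )


# Hypothetical inventory for illustration.
inventory = [
    {"name": "NPM_TOKEN", "sensitive": False},
    {"name": "GITHUB_TOKEN", "sensitive": True},
    {"name": "NODE_ENV", "sensitive": False},
    {"name": "stripe_api_key"},
]
print(unprotected_secrets(inventory))
```

Name matching is a blunt heuristic. It will miss secrets with innocuous names and flag a few harmless ones, so treat the output as a review list, not a verdict.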

If you're running Google Workspace: check right now for this OAuth Client ID:

110671459871-30f1spbu0hptbs60cb4vsmv79i7bbvqj.apps.googleusercontent.com

If it's there, revoke it and start your incident response process. Pull up your Google Workspace Admin console and go through every third-party OAuth application that has grants in your org while you're at it. At work I run this audit periodically alongside our Palo Alto PA-3220 policy reviews, and every single time something turns up that shouldn't still be there. Stale grants with broad permissions sitting untouched for months aren't the edge case; they're what the list actually looks like.
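If you can get your grants into a flat list, the triage itself is simple. Here's a minimal sketch, assuming you've already exported grant records into dicts with the keys shown. The field names, the 90-day staleness window, and the list of "broad" scope hints are all my assumptions rather than anything Google's tooling emits in this exact form.

```python
from datetime import datetime, timedelta, timezone

# Client ID called out in this post.
FLAGGED_CLIENT_IDS = {
    "110671459871-30f1spbu0hptbs60cb4vsmv79i7bbvqj.apps.googleusercontent.com",
}

# Assumption: scope substrings treated as "broad". Tune for your org.
BROAD_SCOPE_HINTS = ("mail.google.com", "auth/drive", "admin.directory")


def audit_grants(grants, now, stale_after_days=90):
    """Split grants into (flagged, stale_broad).

    Each grant is a dict with hypothetical keys: client_id, user,
    scopes (list of str), last_used (timezone-aware datetime).
    """
    cutoff = now - timedelta(days=stale_after_days)
    flagged, stale_broad = [], []
    for g in grants:
        if g["client_id"] in FLAGGED_CLIENT_IDS:
            flagged.append(g)
            continue
        broad = any(h in s for s in g["scopes"] for h in BROAD_SCOPE_HINTS)
        if broad and g["last_used"] < cutoff:
            stale_broad.append(g)
    return flagged, stale_broad


def utc(y, m, d):
    return datetime(y, m, d, tzinfo=timezone.utc)


# Hypothetical export; users and the other client IDs are made up.
sample = [
    {"client_id": next(iter(FLAGGED_CLIENT_IDS)), "user": "alice@example.com",
     "scopes": ["https://www.googleapis.com/auth/drive"], "last_used": utc(2026, 3, 1)},
    {"client_id": "hypothetical-1.apps.googleusercontent.com", "user": "bob@example.com",
     "scopes": ["https://mail.google.com/"], "last_used": utc(2025, 10, 1)},
    {"client_id": "hypothetical-2.apps.googleusercontent.com", "user": "carol@example.com",
     "scopes": ["https://www.googleapis.com/auth/userinfo.email"], "last_used": utc(2025, 1, 1)},
    {"client_id": "hypothetical-3.apps.googleusercontent.com", "user": "dave@example.com",
     "scopes": ["https://www.googleapis.com/auth/drive"], "last_used": utc(2026, 4, 20)},
]

flagged, stale_broad = audit_grants(sample, now=utc(2026, 4, 25))
print("revoke now:", [g["user"] for g in flagged])
print("review:", [g["user"] for g in stale_broad])
```

The flagged ID is checked first and unconditionally, because a recently used grant for a compromised client is worse news, not better.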

Rotate your keys. Enable 2FA everywhere. Audit your OAuth grants. Not because it's good hygiene to say you did, but because this is literally how Vercel got hit.

UK Biobank: 500,000 Records on Alibaba (And It Wasn't Really a Hack)

On April 23, UK technology minister Ian Murray confirmed to the House of Commons that data from 500,000 UK Biobank volunteers had been listed for sale on Alibaba's e-commerce platform. Three separate listings. At least one covering all 500,000 participants.

Here's the part that's easy to get wrong: this wasn't a breach in the traditional perimeter-breach sense. Three Chinese academic research institutions with legitimate, contractual access to the UK Biobank dataset apparently downloaded the bulk dataset to local storage, and through means still being investigated, that data ended up listed for sale.

UK Biobank confirmed the data was anonymized, meaning no names, addresses, phone numbers, or NHS numbers were included. But the dataset contained genome sequences, MRI brain scans, sleep and diet data, mental health outcomes, and biomarkers. Prof. Luc Rocher from the Oxford Internet Institute noted this was reportedly the 198th known exposure of UK Biobank data since last summer, and pointed out that the data "remains available online for anyone to download today."

Anonymized doesn't mean safe. Rich biological datasets can be re-identified when cross-referenced with other records. Researchers have demonstrated this repeatedly. The "de-identified" label gives institutions a false sense of security about post-custody handling.

The listings were removed before any confirmed sale. The three institutions were banned from the platform.

What bothers me about this one from an infrastructure standpoint is the access model itself. Legitimate access to a dataset does not mean unlimited bulk downloads to local storage with no monitoring of what happens afterward. At SRFECC we deal with data access controls constantly. You can grant someone the right to query data without giving them the ability to siphon the entire dataset to local disk. Contractual obligations mean nothing if you have no technical controls enforcing them, and a lot of organizations treat those two things like they're interchangeable when they're really not.
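To make "technical controls" concrete, here's a toy sketch of one such control: a per-user export budget that the data service checks before returning rows. The class name, the row-count budget, and the in-memory counter are hypothetical and mine alone; this is not how UK Biobank's platform works, just the shape of the idea.

```python
from dataclasses import dataclass, field


class ExportBudgetExceeded(Exception):
    """Raised when a request would push a user past their row budget."""


@dataclass
class ExportGuard:
    max_rows_per_user: int
    used: dict = field(default_factory=dict)

    def authorize(self, user: str, rows_requested: int) -> int:
        """Record the request and return the rows remaining for this user.

        Raises ExportBudgetExceeded without recording anything if the
        request would exceed the budget.
        """
        if rows_requested <= 0:
            raise ValueError("rows_requested must be positive")
        total = self.used.get(user, 0) + rows_requested
        if total > self.max_rows_per_user:
            raise ExportBudgetExceeded(
                f"{user} requested {rows_requested} rows, "
                f"budget is {self.max_rows_per_user}"
            )
        self.used[user] = total
        return self.max_rows_per_user - total


guard = ExportGuard(max_rows_per_user=1000)
print(guard.authorize("researcher-a", 600))
try:
    guard.authorize("researcher-a", 500)
except ExportBudgetExceeded as exc:
    print("blocked:", exc)
```

A real deployment would persist the counters, reset them on a schedule, and alert on denials. The point is that the contract's limit becomes something the system enforces instead of something a signature promises.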

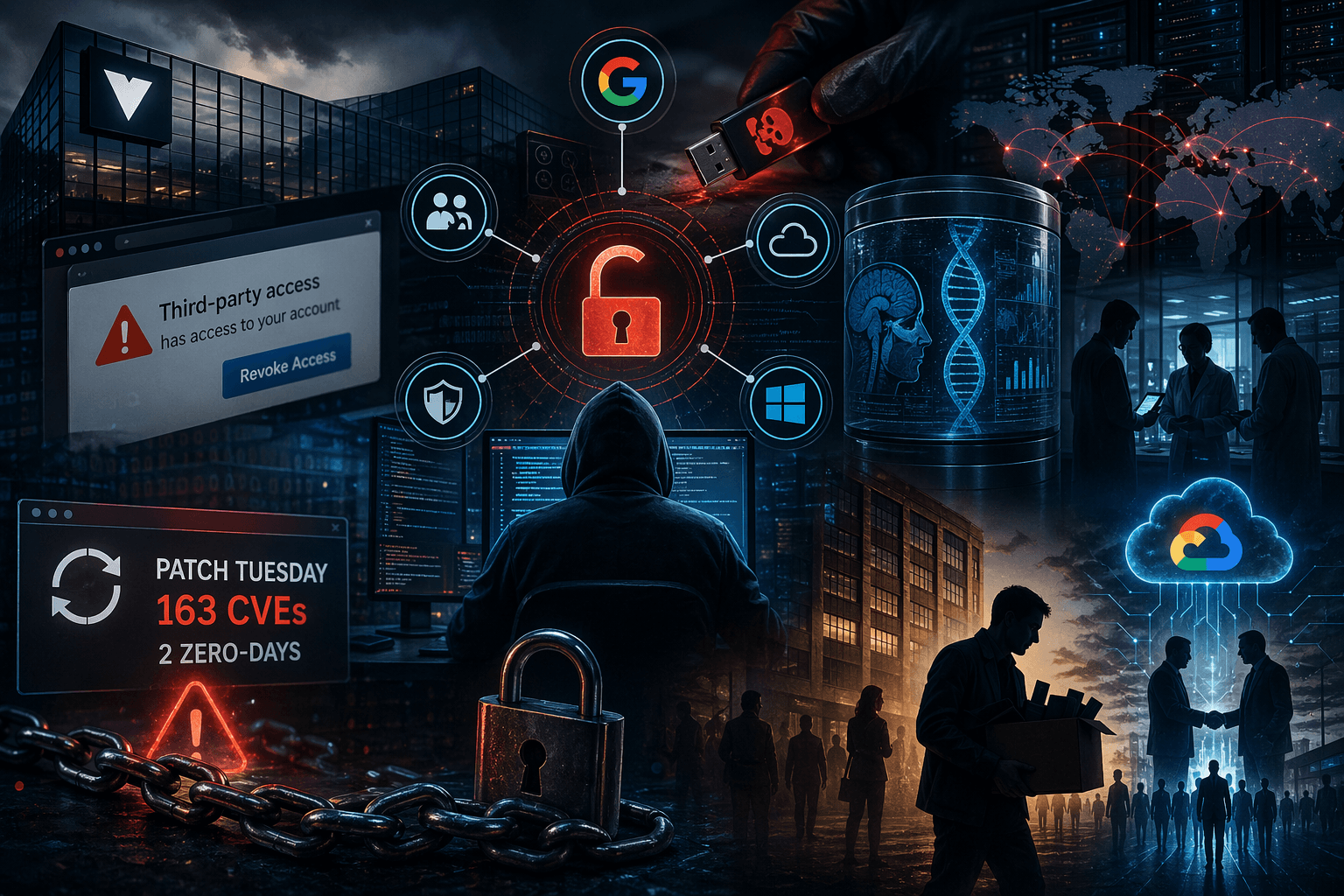

Patch Tuesday: 163 CVEs and Two Zero-Days That Actually Matter

Microsoft's April 2026 Patch Tuesday dropped on April 14 and landed 163 CVEs. Multiple sources are calling it the second-largest monthly release in Microsoft history. Eight rated Critical, most of them RCE. Two zero-days.

The one you need to have already patched:

CVE-2026-32201: SharePoint Server Spoofing (Actively Exploited)

Unauthenticated, network-based attack. No credentials required. If you're running on-premises SharePoint with any internet exposure, this should have been patched the same day it dropped. The CVSS of 6.5 understates the real-world risk considerably given the zero-auth requirement and active exploitation in the wild at time of release.

CVE-2026-33825: Defender Antimalware Platform EoP ("BlueHammer")

Publicly known before the patch landed. Proof-of-concept code had been on GitHub since April 3, 11 days before Microsoft shipped the fix on April 14. Successful exploitation escalates a low-privileged local user to SYSTEM. Defender updates automatically through platform update 4.18.26050.3011, but verify that it actually landed across your endpoints rather than assuming it did.
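Checking that the update landed comes down to comparing version numbers across an inventory. A small sketch, assuming you already have a hostname-to-platform-version mapping from whatever endpoint tooling you use (the hostnames and the mapping format here are made up):

```python
REQUIRED_PLATFORM = "4.18.26050.3011"


def parse_version(version: str) -> tuple:
    """Turn '4.18.26050.3011' into (4, 18, 26050, 3011) for numeric comparison."""
    return tuple(int(part) for part in version.strip().split("."))


def unpatched(endpoints: dict, required: str = REQUIRED_PLATFORM) -> list:
    """Return hostnames whose platform version is older than `required`."""
    floor = parse_version(required)
    return sorted(
        host for host, version in endpoints.items()
        if parse_version(version) < floor
    )


# Hypothetical inventory.
fleet = {
    "ws-001": "4.18.26050.3011",
    "ws-002": "4.18.26030.3011",
    "ws-003": "4.18.26060.1",
    "ws-004": "4.18.9999.5",
}
print(unpatched(fleet))
```

Comparing as integer tuples rather than strings matters: as plain text, "4.18.9999.5" sorts after "4.18.26050.3011" and an old build would pass the check.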

CVE-2026-33824: Windows IKE Service Extensions RCE (CVSS 9.8)

Unauthenticated, exploitable by sending crafted packets to systems running IKEv2. If you have firewall rules already blocking UDP 500 and 4500 from untrusted sources, that's your interim mitigation until you can patch. If you don't, that's the first thing to fix.
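If you want to sanity-check a ruleset on paper, the logic looks roughly like this. It's a simplified sketch: the rule format is hypothetical, evaluation is first-match, and I'm assuming an implicit default deny, which you should confirm against how your own firewall actually behaves.

```python
import ipaddress

IKE_PORTS = (500, 4500)


def first_match(rules, src_ip, port, proto="udp"):
    """Return the action of the first rule matching this packet.

    Each rule is a hypothetical dict: action, proto, src (CIDR), ports.
    Assumption: traffic matching no rule is denied.
    """
    src = ipaddress.ip_address(src_ip)
    for rule in rules:
        if (
            rule["proto"] == proto
            and port in rule["ports"]
            and src in ipaddress.ip_network(rule["src"])
        ):
            return rule["action"]
    return "deny"


def exposed_ike_ports(rules, untrusted_ip):
    """List IKE ports an untrusted source address is allowed to reach."""
    return [p for p in IKE_PORTS if first_match(rules, untrusted_ip, p) == "allow"]


# Hypothetical ruleset: the second rule is the kind of leftover that bites.
rules = [
    {"action": "allow", "proto": "udp", "src": "10.0.0.0/8", "ports": [500, 4500]},
    {"action": "allow", "proto": "udp", "src": "0.0.0.0/0", "ports": [4500]},
]
print(exposed_ike_ports(rules, "203.0.113.7"))
```

This only models the ruleset you feed it. It says nothing about NAT, upstream devices, or rules that never made it into your export.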

One note on sourcing: the article I was rewriting cited a "wormable TCP/IP" CVE (CVE-2026-33827) that I couldn't verify against any primary source. I'm not including it here because I don't have confidence in how it was described, and I'd rather acknowledge the gap than pass along bad information.

The broader context matters here: every single month of 2026 so far has included at least one actively exploited zero-day in Microsoft's release. That's not a coincidence. It's a structural reality that your patch management process either accounts for or it doesn't. If you're deploying critical and actively exploited CVEs on a monthly batch cycle instead of within 24 to 48 hours of release, you're already working behind the attackers.
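Here's what that policy looks like as arithmetic. This is a sketch of one possible rule, not a standard: I'm assuming a 48-hour window for anything actively exploited or with CVSS 9.0 and up, and 30 days for everything else. The release timestamp (midnight on April 14) and the third CVE entry are placeholders.

```python
from datetime import datetime, timedelta

URGENT_WINDOW = timedelta(hours=48)
ROUTINE_WINDOW = timedelta(days=30)


def patch_deadline(released: datetime, cvss: float, exploited: bool) -> datetime:
    """Deadline under the assumed policy: exploited or CVSS >= 9.0 is urgent."""
    urgent = exploited or cvss >= 9.0
    return released + (URGENT_WINDOW if urgent else ROUTINE_WINDOW)


released = datetime(2026, 4, 14)  # time of day is a placeholder

# First two entries use the figures from this post; the third is hypothetical.
cves = [
    ("CVE-2026-32201", 6.5, True),
    ("CVE-2026-33824", 9.8, False),
    ("CVE-EXAMPLE-0001", 5.0, False),
]

for cve_id, cvss, exploited in cves:
    print(cve_id, patch_deadline(released, cvss, exploited).date())
```

Note that the SharePoint bug lands in the urgent bucket despite its 6.5 score, because exploitation status overrides CVSS. A severity-only policy would have given it 30 days.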

Microsoft's First-Ever Voluntary Buyout

On April 23, Microsoft announced its first voluntary buyout program in the company's 51-year history. About 7% of U.S. employees are eligible (roughly 8,750 people), using a "Rule of 70" formula: years of service plus age must equal 70 or more, senior director level and below. Eligible employees will receive details by May 7 and then have 30 days to decide.

It's framed as voluntary, and it is. What makes it interesting is the timing: this is landing while Microsoft simultaneously pours billions into AI infrastructure and holds hiring freezes across non-AI parts of the business. Those two facts belong in the same sentence.

The same pattern is showing up everywhere. Meta cutting 8,000 jobs. Oracle reducing 30,000 roles. The common thread is what some analysts are calling "skill mix" restructuring: shedding roles that don't map to agentic AI and automation buildout, redirecting that capital toward the work that does.

Not going to moralize about it. That capital is going where it's going. What I will say to people working in IT and infosec: right now, knowing how to build and operate AI infrastructure, not just consume AI output, is where the demand is pointing. That gap between the two is where the interesting work is.

Google's $750M Partner Fund

At Cloud Next 2026 in Las Vegas on April 22, Google Cloud announced a $750 million fund directed at its 120,000-member partner ecosystem of consulting firms, systems integrators, and software partners, specifically to help them build and deploy agentic AI solutions on Google Cloud.

To be clear about what this actually is: it's not Google building agents. It's Google funding Accenture, Deloitte, PwC, Capgemini and others to build agents for enterprise customers on Google's infrastructure. Embedded forward-deployed engineers from Google, early Gemini model access, sandbox credits for prototyping.

The strategic logic is pretty straightforward. Google has the platform. The consulting firms have the enterprise client relationships. Google is paying to make sure those firms choose its stack when they go to deploy.

The Datadog State of AI Engineering 2026 report puts 69% of companies now running three or more AI models simultaneously. That number makes the whole "agentic factory" framing land differently. It's not about one model doing one thing. It's about orchestrating multiple models across workflows, which is the infrastructure problem Google and its partners are betting they can own at scale.

Where This Actually Lands

None of these stories are isolated.

Vercel got breached because a third-party AI tool had OAuth grants nobody audited. Half a million UK health records ended up on Alibaba because legitimate access wasn't paired with technical controls over what that access could do. Microsoft is patching at a scale that requires the second-largest monthly release in company history, and attackers are weaponizing zero-days before patches even land. Meanwhile the companies that make the tools we all use are restructuring toward AI infrastructure as fast as they can.

The surface area is growing. The trust relationships are multiplying. The controls aren't keeping pace.

For me, that means spending some time this weekend going through every OAuth grant in my homelab and at work, making sure nothing is sitting there with broad access that nobody has thought about in six months. It also means treating Patch Tuesday like it has a 48-hour deadline, not a 30-day one.

Probably a good time for all of us to do the same.