Why I am Moving my AI "Agents" to the Edge (and Why You Should Too)

The "Offline" Advantage for First Responders

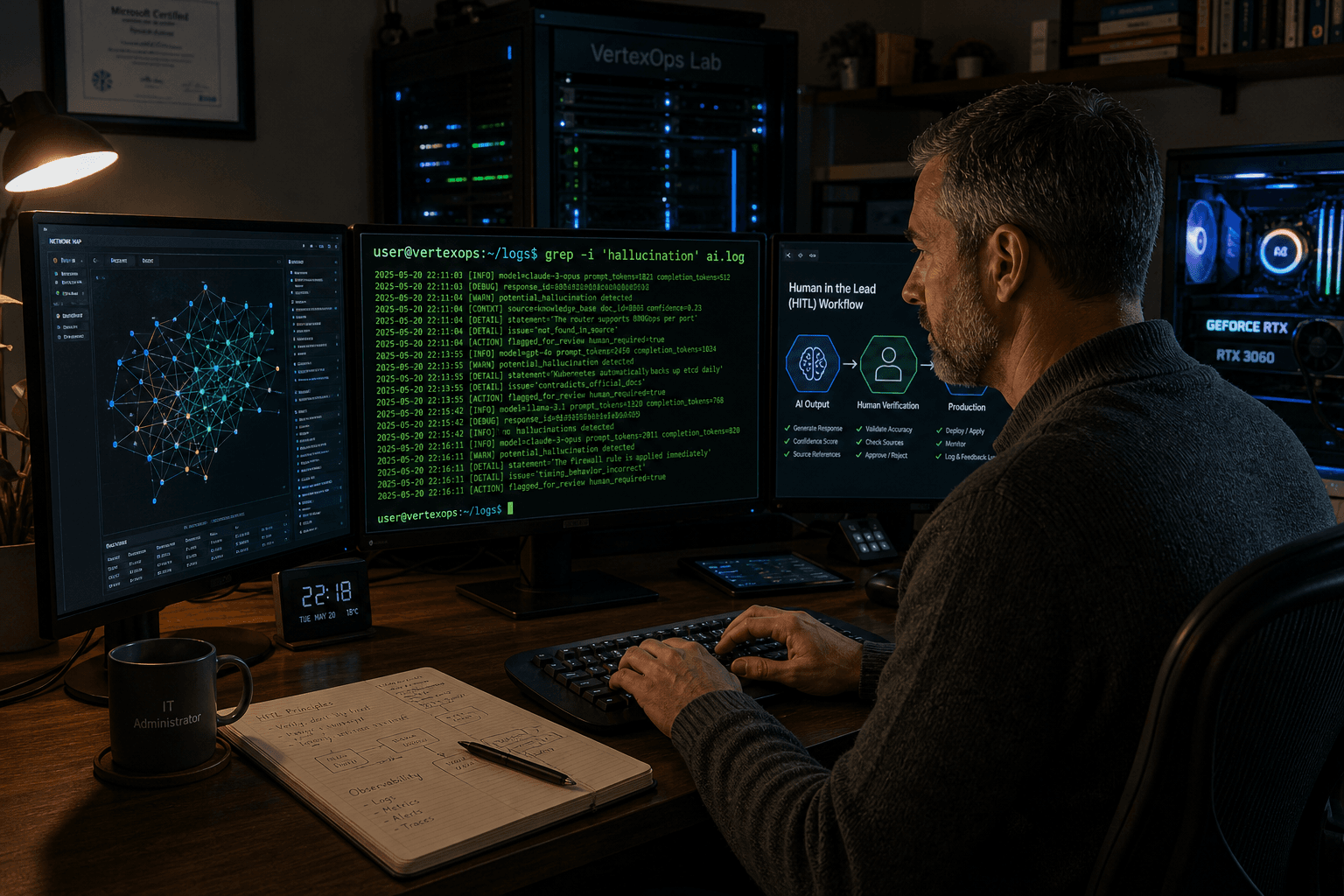

I have been watching the "Agentic AI" trend blow up on Hashnode lately. It seems like every other post is about how AI is moving from just answering questions to actually doing things—writing code, managing QA, and even handling incident triage. It is exciting stuff, but as someone who works in public safety, my first thought is always the same: What happens when the cloud goes dark?

In my line of work, we talk about "resilience" a lot. Whether it is my day job or volunteering with Sacramento CERT, you learn pretty quickly that if your tools depend on a perfect internet connection and a third-party server's uptime, you do not actually own those tools.

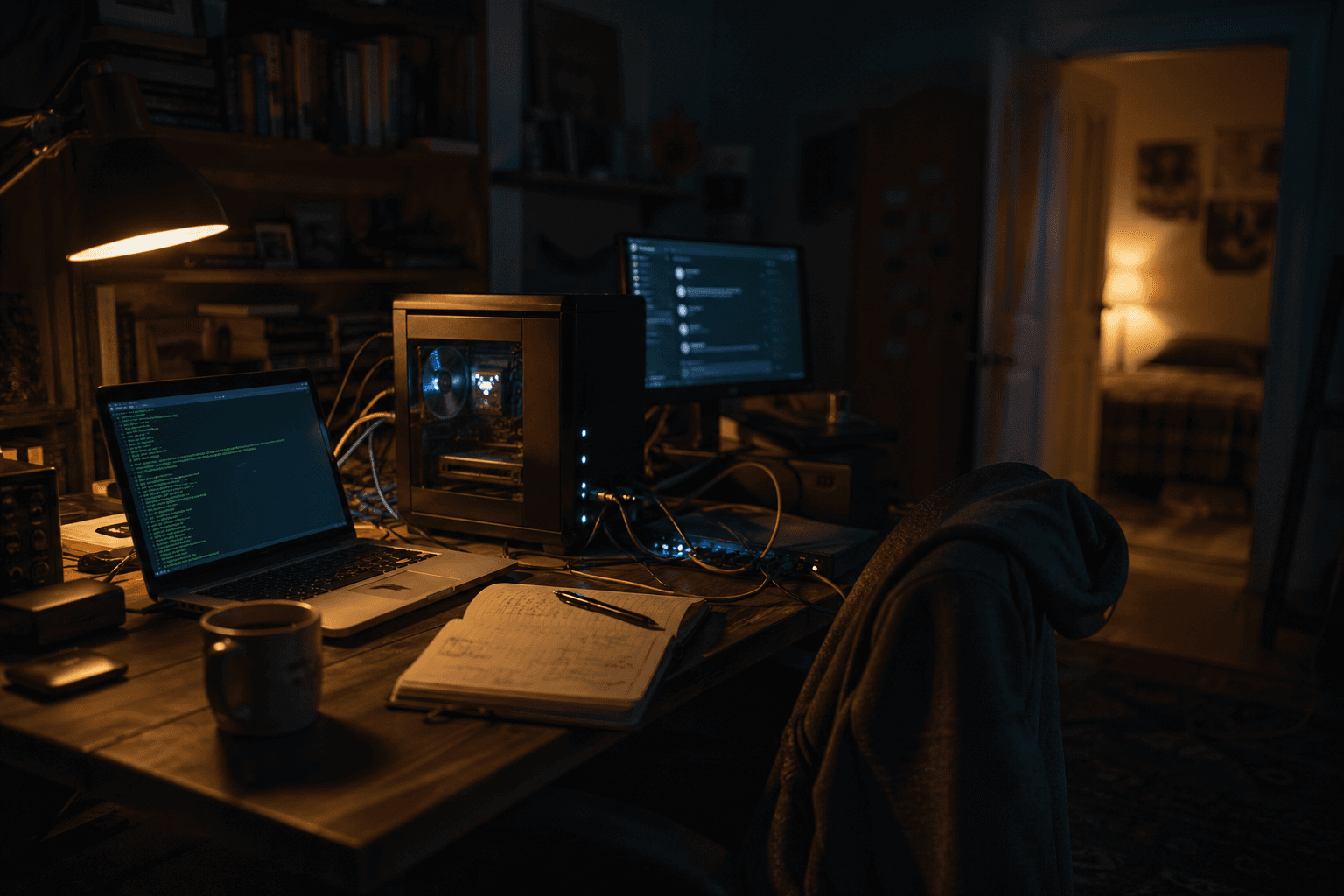

That is why I have been spending my nights in my home lab (shoutout to my trusty Dell T3610) moving away from the "cloud-first" mindset.

The Shift to the Edge

With the release of Gemma 4 and Qwen 3.5, the gap between "cloud AI" and "local AI" has narrowed to the point where it rarely matters for most practical tasks. I have been testing these models via Ollama, and the performance on consumer-grade hardware is seriously impressive.

Here is why this matters for those of us building infrastructure:

Privacy is non-negotiable: If you are working with sensitive data—whether it is public safety info or just your own personal projects—sending that to a proprietary cloud model is a risk. Keeping it local means you keep the keys.

True Resilience: If the grid goes sideways or the fiber gets cut, my local LLM keeps running as long as the box itself has power. For an "Agent" to be useful in a real emergency, it has to be reachable.

Latency: When you are running a local model on your own metal, you are not waiting on network round trips to a remote API or getting throttled by rate limits. Response time comes down to your own hardware.
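To make the "no round trip to the cloud" point concrete, here is a minimal sketch of timing a single prompt against a local Ollama server. It assumes Ollama is listening on its default address (`http://localhost:11434`) and uses its `/api/generate` endpoint. The model tag `your-local-model` is a placeholder, not a real tag, so swap in whatever `ollama list` shows on your machine. The helper names `build_payload` and `ask_local` are mine, purely for illustration.

```python
import json
import time
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/generate"  # Ollama's default local endpoint
MODEL = "your-local-model"  # placeholder (assumption): use a tag from `ollama list`


def build_payload(prompt, model=MODEL):
    """Build a non-streaming request body for Ollama's /api/generate."""
    return {"model": model, "prompt": prompt, "stream": False}


def ask_local(prompt, model=MODEL, url=OLLAMA_URL, timeout=120):
    """Send one prompt to the local server; return (answer, seconds elapsed)."""
    body = json.dumps(build_payload(prompt, model)).encode("utf-8")
    req = urllib.request.Request(
        url, data=body, headers={"Content-Type": "application/json"}
    )
    start = time.perf_counter()
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        data = json.load(resp)  # non-streaming replies arrive as one JSON object
    return data["response"], time.perf_counter() - start


# Example usage (needs a running Ollama server, so it is left commented out):
# answer, seconds = ask_local("Explain what CERT volunteers do in two sentences.")
# print(f"{seconds:.1f}s: {answer}")
```

Nothing here leaves the machine, so you can pull the network cable and run it again to see the resilience point for yourself. The timing you get is a function of your own hardware and model size, not of anyone's rate limiter.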

What is in my Stack?

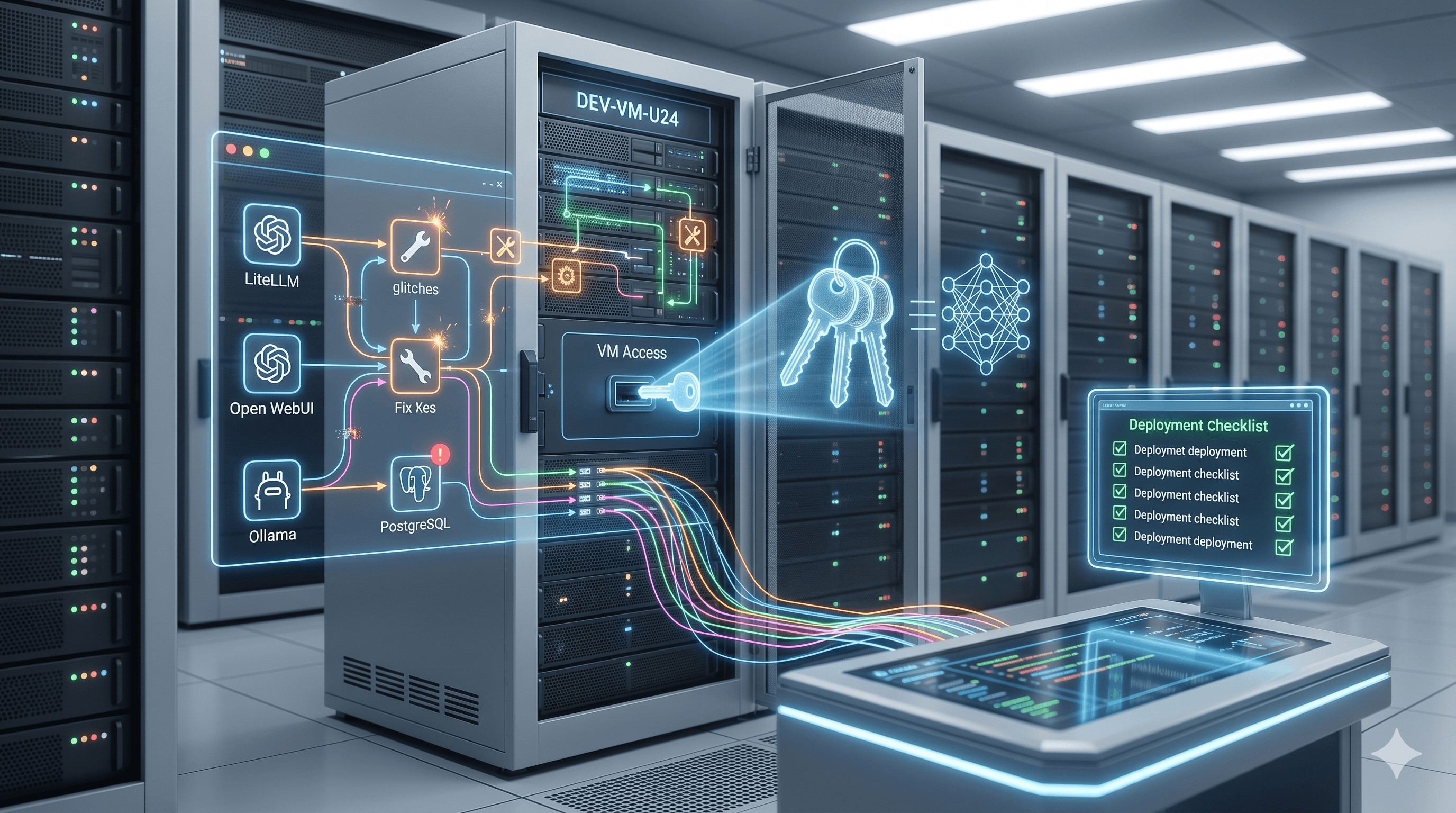

I am currently leaning heavily on a self-hosted setup that looks something like this:

Hypervisor: VMware ESXi 8 (standard stuff, but rock solid).

Model Runner: Ollama, pulling the latest Qwen and Gemma weights.

Orchestration: Exploring how to use these local models for basic "agentic" tasks like automated log analysis and system hardening.
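For a sense of what a basic log-analysis "agent" could look like, here is a rough sketch and not a description of my finished setup. It pre-filters log lines with a keyword list, then hands only the suspicious ones to a local model through Ollama's `/api/chat` endpoint. The keyword list, the system prompt, the model tag `your-local-model`, and every function name are assumptions made up for this example.

```python
import json
import urllib.request

OLLAMA_CHAT_URL = "http://localhost:11434/api/chat"  # Ollama's default local endpoint
MODEL = "your-local-model"  # placeholder (assumption): use a tag from `ollama list`
KEYWORDS = ("failed password", "invalid user", "segfault", "denied")  # illustrative only


def select_suspicious(lines, keywords=KEYWORDS, limit=50):
    """Keep only lines containing a keyword so the prompt stays small."""
    hits = [ln.strip() for ln in lines if any(k in ln.lower() for k in keywords)]
    return hits[:limit]


def build_messages(hits):
    """Build chat messages asking the model for a short triage summary."""
    system = (
        "You are a log triage assistant. List likely problems and one "
        "suggested next step for each. Do not invent log entries."
    )
    return [
        {"role": "system", "content": system},
        {"role": "user", "content": "\n".join(hits)},
    ]


def ask_ollama(messages, model=MODEL, url=OLLAMA_CHAT_URL, timeout=300):
    """POST a non-streaming chat request to the local server; return the reply text."""
    body = json.dumps({"model": model, "messages": messages, "stream": False})
    req = urllib.request.Request(
        url, data=body.encode("utf-8"), headers={"Content-Type": "application/json"}
    )
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        return json.load(resp)["message"]["content"]


def triage(lines, ask=ask_ollama):
    """Filter the log, then ask the model only if something looks off."""
    hits = select_suspicious(lines)
    if not hits:
        return "No suspicious lines found."
    return ask(build_messages(hits))


# Example usage (needs a running Ollama server and a readable log file):
# with open("/var/log/auth.log") as fh:
#     print(triage(fh))
```

Note that the sketch only produces a summary for a human to read. Letting a model actually apply hardening changes on its own is a much bigger step, and I would want a person approving anything it proposes.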

Why this matters

I have always liked platforms that focus on community and shared knowledge. The tech sector needs more of that "civic" mindset. We should be building systems that empower people, not just systems that make us dependent on a few giant corporations.

If you are just starting with local LLMs, my advice is to stop worrying about the benchmarks and just start building. Set up an old workstation, install Linux, and see what you can make it do without an internet connection. You might be surprised at how much power you actually have sitting under your desk.

I am curious—how many of you are actually running your "Agents" locally vs. relying on Claude or GPT-5? Let’s talk about it in the comments.